Wageningen University & Research | FEM-31806 | Models for Ecological Systems | FEM | PPS | WEC

NUCOM - Day 4

First make a copy of the script you created on Day 2 to implemented the model (or, copy the solution of the implementation of the model from the solution to day 2, and then continue with the exercises below.

Aims

Aim 1: Sensitivity

Probably you have already separated parameters between those that are true constants (e.g. molar weights, physical properties etc.), those that are more or less invariable or are measured with great precision and therefore hard coded in the parameter list, and those that are highly uncertain and/or can vary a lot in different scenarios. The latter are in this course typically defined per scenario and given as input arguments to the function creating a named vector with parameter values, or as a defined list in input scenario-specific input files for other models.

Select three parameters for sensitivity analysis. Run both a sensitivity analysis (sensu stricto), and also an elasticity analysis, which is a normalized version of sensitivity (see textbook and lectures for differences). Run the model three times (150 years) but with different parameter values: at the default parameter value, at the default parameter value -10%, and at the default parameter value +10%. Study the effects of these parameter values for the state variables: BC1 and BC2. Visualize by plotting the results. For visualization you can first best plot the state variable(s) of interest against time (possibly with multiple scenarios in a single plot; a separate line for each scenario). Moreover, you can opt for a barplot showing the sensitivity and elasticity index values for the parameters.

Aim 2: Calibration

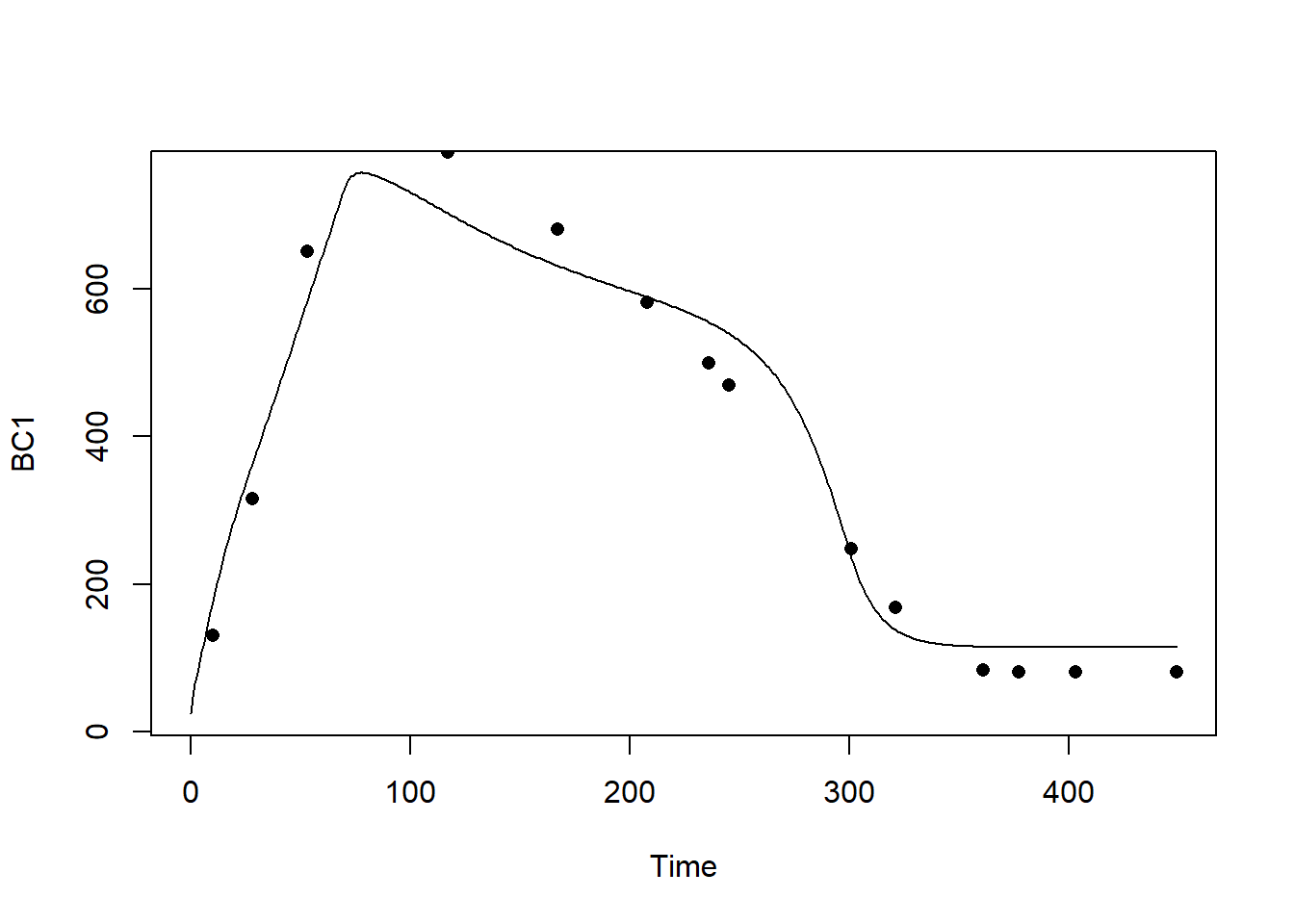

- Calibrate the model for three critical and uncertain parameters: NCONC1, GMAX1 and MORT1. Use the data sets provided on Brightspace (NUCOM.csv). Calibrate the model for state variable BC1, using the numerical optimization method as developed in Template 6. Visualize the implications for the model: plot the state variables against time.

Aim 3: Validation

Give the options to validate your model.

What data would you need?

Answers

Download here the code as shown on this page in a separate .r file.

Sensitivity

Parameters

The function returning a named vector with parameter values:

ParmsNUCOM <- function(

### Inputs from outside into the system

# Nitrogen from atmospheric deposition

NDEPCO = 2.0, # gN / (m2 * year)

# Imported carbon from seeds from outside the considered field

SEEDC1 = 10.0, # gC / (m2 year)

SEEDC2 = 10.0, # gC / (m2 year)

# Imported nitrogen from seeds from outside the considered field

SEEDN1 = 0.2, # gN / (m2 year)

SEEDN2 = 0.2, # gN / (m2 year)

### System parameter values

# Nitrogen concentration in species 1 and 2

NCONC1 = 0.02, # gN / gC

NCONC2 = 0.02, # gN / gC

# Maximum growth rate of species 1 and 2

GMAX1 = 300.0, # gC / (m2 year)

GMAX2 = 900.0, # gC / (m2 year)

# Relative mortality rates of species 1 and 2

MORT1 = 0.6, # (g/year) / g or 1/year

MORT2 = 0.9, # (g/year) / g or 1/year

# Relative decay rates of species 1 and 2

DECAY1 = 0.1, # (g/year) / g or 1/year

DECAY2 = 0.3, # (g/year) / g or 1/year

# Carbon en nitrogen concentrations of the micro-organisms

CCONMO = 0.5, # gC / g biomass

NCONMO = 0.05, # gN / g biomass

# Assimilation efficiency of the micro-organisms

EFFMO = 0.2 # gCbuilt in into Biomass / gC liberated from soil organic matter

) {

# Gather parameters in a named vector

y <- c(NDEPCO = NDEPCO,

SEEDC1 = SEEDC1, SEEDC2 = SEEDC2,

SEEDN1 = SEEDN1, SEEDN2 = SEEDN2,

NCONC1 = NCONC1, NCONC2 = NCONC2,

GMAX1 = GMAX1, GMAX2 = GMAX2,

MORT1 = MORT1, MORT2 = MORT2,

DECAY1 = DECAY1, DECAY2 = DECAY2,

CCONMO = CCONMO,

NCONMO = NCONMO,

EFFMO = EFFMO)

# Return

return(y)

}Initial conditions

The function returning a named vector with initial values of the 8 state variables:

- Biomass Carbon of two vegetation species (BC1, BC2);

- Biomass Nitrogen of two vegetation species (BN1, BN2);

- Soil Carbon coming from the two plant species (SC1, SC2);

- Soil Nitrogen coming from the two plant species (SN1, SN2):

InitNUCOM <- function(

# For the vegetation

BC1 = 25.0, # gC in Veg.1 / m2

BC2 = 25.0, # gC in Veg.2 / m2

BN1 = 0.5, # gN in Veg.1 / m2

BN2 = 0.5, # gN in Veg.2 / m2

# For the soil

SC1 = 25.0, # gC from Veg.1 / m2

SC2 = 25.0, # gC from Veg.2 / m2

SN1 = 0.5, # gN from Veg.1 / m2

SN2 = 0.5 # gN from Veg.2 / m2

){

# Gather initial conditions in a named vector; given names are names for the state variables in the model

y <- c(BC1 = BC1, BC2 = BC2,

BN1 = BN1, BN2 = BN2,

SC1 = SC1, SC2 = SC2,

SN1 = SN1, SN2 = SN2)

# Return

return(y)

}Differential equations

The function computing the rates of change of the state variables with respect to time:

RatesNUCOM <- function(t, y, parms) {

# use the with() function to be able to access the parameters and states easily

# remember that the with() function is with(data, expr), where

# - 'data' is ideally a list with named elements

# thus: 'y' and 'parms' are combined using function c and converted using function as.list

# - 'expr' is an expression (i.e. code) that is evaluated

# this can span multiple lines of code when embraced with curly brackets {}

with(

as.list(c(y, parms)),{

### Optional: forcing functions: get value of the driving variables at time t (if any)

### Optional: auxiliary equations

# Litter production of both species in terms of Carbon and Nitrogen

LITPC1 <- BC1 * MORT1

LITPC2 <- BC2 * MORT2

LITPN1 <- BN1 * MORT1

LITPN2 <- BN2 * MORT2

# Decomposition of soil organic matter in terms of carbon and nitrogen

# DECOM is the net carbon production, lost as CO2-C from the system

DECOM1 <- SC1 * DECAY1 * (1.0 - EFFMO)

DECOM2 <- SC2 * DECAY2 * (1.0 - EFFMO)

# Definition of details of overall input-output rate equations

# Mineralisation of Nitrogen

MINER1 <- DECAY1 * SC1*( (SN1/SC1) - (NCONMO/CCONMO)*EFFMO )

MINER2 <- DECAY2 * SC2*( (SN2/SC2) - (NCONMO/CCONMO)*EFFMO )

MINTOT <- MINER1 + MINER2

# Nitrogen supply to (N-deposition) the system

NDEP <- NDEPCO

NAVAIL <- NDEP + MINER1 + MINER2

# Growth rates of both species

NLIMG1 <- ( BC1/(BC1 + BC2) ) * NAVAIL/NCONC1

LLIMG1 <- ( BC1/(BC1 + BC2) ) * GMAX1

ACTGC1 <- pmin(NLIMG1 , LLIMG1)

NLIMG2 <- ( BC2/(BC1 + BC2) ) * NAVAIL/NCONC2

LLIMG2 <- ( BC2/(BC1 + BC2) ) * GMAX2

ACTGC2 <- pmin(NLIMG2 , LLIMG2)

# Therefore, CO2 evolution is calculated per species and in total as:

CO21DT <- DECOM1

CO22DT <- DECOM2

# TOTCO2 = CO21 + CO22

# Nitrogen uptake rates of both species

NUPT1 <- ACTGC1 * NCONC1

NUPT2 <- ACTGC2 * NCONC2

# Nitrogen loss (Leaching) from the system

LEACH <- NAVAIL - NUPT1 - NUPT2

### Rate equations

DBC1DT <- ACTGC1 - LITPC1 + SEEDC1

DBC2DT <- ACTGC2 - LITPC2 + SEEDC2

DBN1DT <- NUPT1 - LITPN1 + SEEDN1

DBN2DT <- NUPT2 - LITPN2 + SEEDN2

DSC1DT <- LITPC1 - DECOM1

DSC2DT <- LITPC2 - DECOM2

DSN1DT <- LITPN1 - MINER1

DSN2DT <- LITPN2 - MINER2

### Gather all rates of change in a vector

# - the rates should be in the same order as the states (as specified in 'y')

# - it can be a named vector, but does not need to be

RATES <- c(DBC1DT = DBC1DT,

DBC2DT = DBC2DT,

DBN1DT = DBN1DT,

DBN2DT = DBN2DT,

DSC1DT = DSC1DT,

DSC2DT = DSC2DT,

DSN1DT = DSN1DT,

DSN2DT = DSN2DT)

### Optional: get in/out flow used to compute mass balances (or set MB <- NULL)

# not included here (thus here use MB <- NULL), see template 3

MB <- NULL

### Optional: gather auxiliary variables that should be returned (or set AUX <- NULL)

# - this should be a named vector or list!

AUX <- NULL

# Return result as a list

# - the first element is a vector with the rates of change (in the same order as 'y')

# - all other elements are (optional) extra output, which should be named

outList <- list(c(RATES, # the rates of change of the state variables (same order as 'y'!)

MB), # the rates of change of the mass balance terms (or NULL)

AUX) # optional additional output per time step

return(outList)

})

}With these 3 functions now defined, the NUCOM mini-model has been

implemented in R code, and the model can now numerically be solved using

the ode function from the deSolve package.

Here, let’s solve the model for 3 different values of parameter

NCONC1: first for its default value (0.02; solved model

stored in states), then when increasing it by 10% (solved

model stored in statesHI), and then when decreasing it with

10% (solved model stored in statesLO).

states <- ode(y = InitNUCOM(),

times = seq(from=0, to=150, by=1),

func = RatesNUCOM,

parms = ParmsNUCOM(NCONC1 = 0.02),

method = "ode45")

statesHI <- ode(y = InitNUCOM(),

times = seq(from=0, to=150, by=1),

func = RatesNUCOM,

parms = ParmsNUCOM(NCONC1 = 1.1*0.02),

method = "ode45")

statesLO <- ode(y = InitNUCOM(),

times = seq(from=0, to=150, by=1),

func = RatesNUCOM,

parms = ParmsNUCOM(NCONC1 = 0.9*0.02),

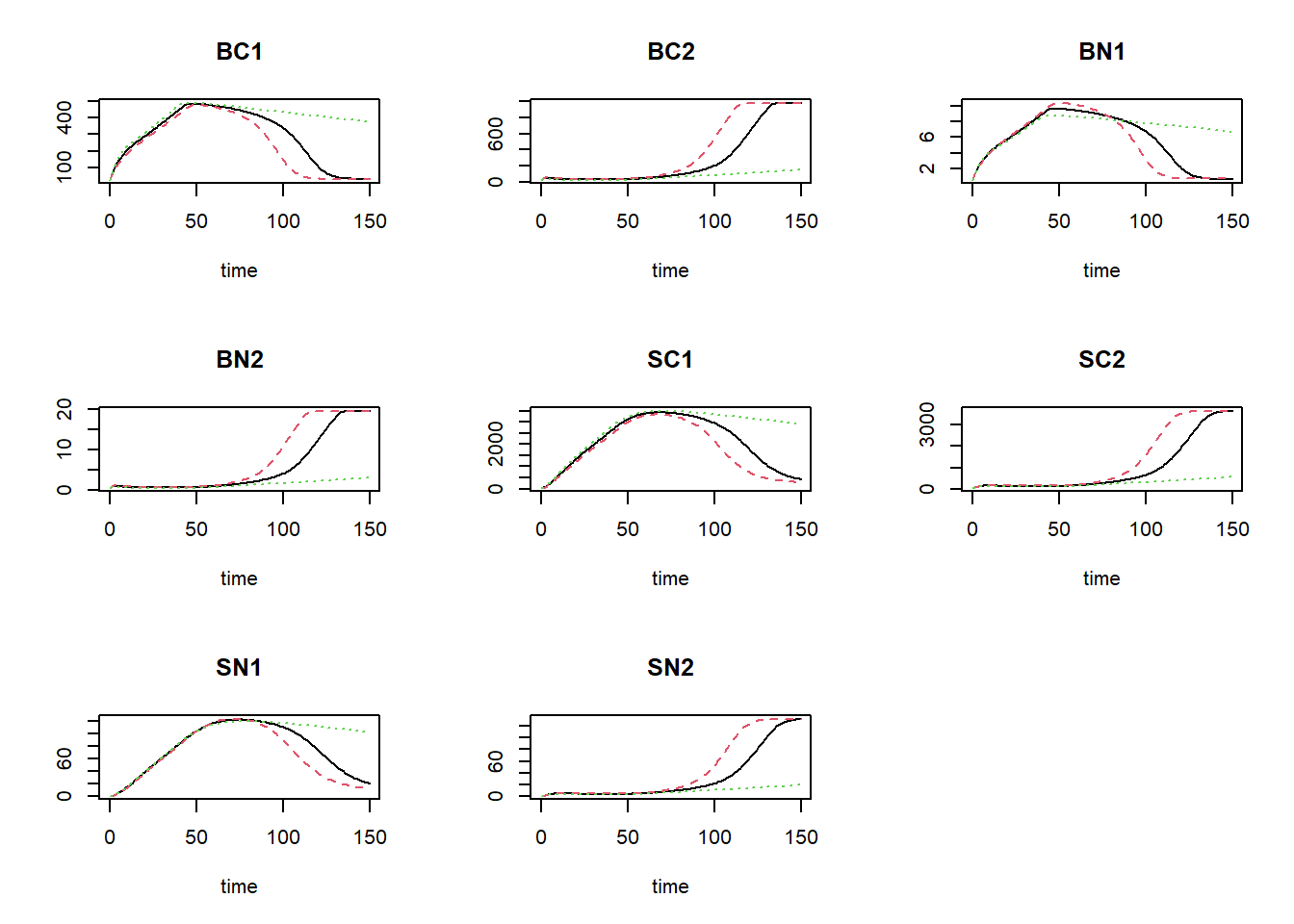

method = "ode45")We can show these different scenarios:

plot(states, statesHI, statesLO)

where the black line is the default value for NCONC1,

the red line is the high value (10% increase), and the green line shows

the simulation for the value reduced by 10%.

We can now calculate the sensitivity index (SI) and elasticity index

(EI) of the parameter NCONC1 on state BC1 at

the end of the simulation:

deltaState = statesHI[nrow(statesHI),"BC1"] - statesLO[nrow(statesHI),"BC1"]

deltaParm = 0.1*0.02

SI = deltaState / (2*deltaParm)

SI## BC1

## -84468.17EI = (deltaState/states[nrow(states),"BC1"]) / ((2*deltaParm) / 0.02)

EI## BC1

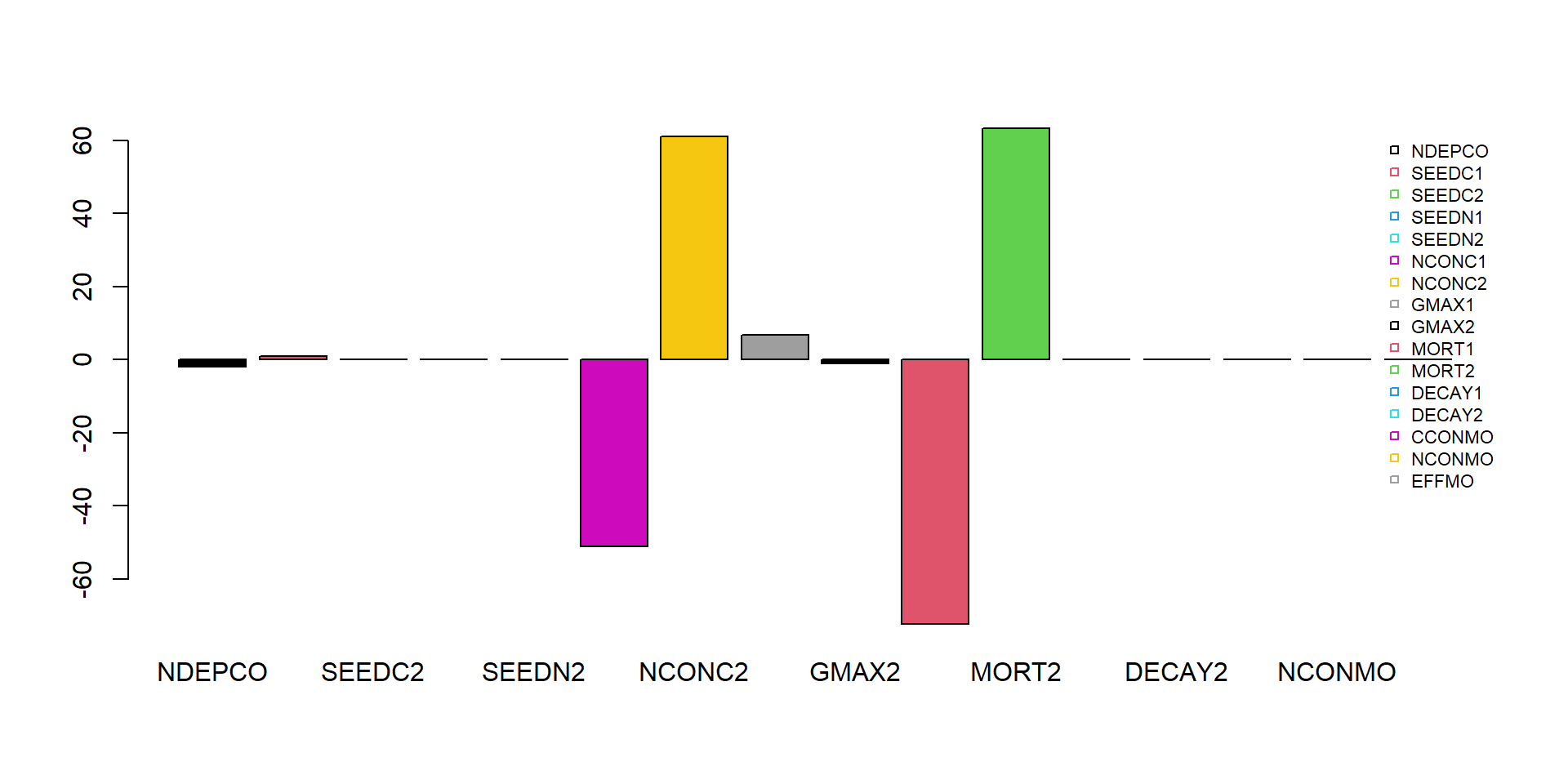

## -51.1956This approach can be repeated for the other parameters and both state variables. Repeating the same procedure for all parameters and all state variables yields the following elasticity indices:

### Settings for sensitivity analysis

# Settings for integration: initial conditions, parameters, output times and method

inits <- InitNUCOM()

parms <- ParmsNUCOM()

times <- seq(from=0, to=150, by=1)

method <- "ode45"

# the parameters to compute sensitivity indices for

sensiParms <- names(parms)

# the state for which we want the sensitivity index

stateName <- "BC1"

# the fraction by which each parameter is changed

changeFraction <- 0.1 # 10%

# time(s) for which we want to compute the sensitivity/elasticity indices

evalTime <- max(times)

### Perform sensitivity analysis

# Create data.frame to hold the output for each state in "sensiParms" and time in "evalTime" (stateDiff)

stateDiff <- data.frame(time = evalTime)

stateDiff[,stateName] <- NA # Column for the value of the state given the unchanged parameters

stateDiff[,sensiParms] <- NA # Column(s) for the difference in state values given changes in parameters

# Create empty vectors to hold the parameter differences (parmDiff)

parmDiff <- parms[sensiParms] # this makes of copy of the named parameter vector

parmDiff[] <- NA # This sets all elements within the vector to value NA

# Update the times vector with the value of evalTime (so that any evalTime is possible)

times <- sort(unique(c(times, evalTime)))

# In a 'for'-loop with iterator "i" (which values specfied by 'sensiParms'):

# - create 2 copies of 'parms': called 'paramsLo' and 'paramsHi'

# - reduce the value of the i-th parameter in paramsLo with 'changeFraction'

# - increase the value of the i-th parameter in paramsHi with 'changeFraction'

# - solve the ODE model for both parameter sets (they are identical except for the i-th parameter)

# - get the values of the state after 'evalTime' time units and store the difference in 'stateDiff'

# - store the difference between the elevated and reduced parameter value in 'parmDiff'

for(i in sensiParms) {

# Create copies of parms

paramsLo <- parms

paramsHi <- parms

# Reduce/increase the value of the i-th parameter

paramsLo[i] <- (1 - changeFraction) * parms[i]

paramsHi[i] <- (1 + changeFraction) * parms[i]

# Solve the ODE model for both sets of parameters

states_lo <- ode(y = inits,

times = times,

parms = paramsLo,

func = RatesNUCOM,

method = method)

states_hi <- ode(y = inits,

times = times,

parms = paramsHi,

func = RatesNUCOM,

method = method)

# Retrieve the values of the state variable at evalTime time units

subsetLo <- subset(as.data.frame(states_lo), time %in% evalTime)[[stateName]]

subsetHi <- subset(as.data.frame(states_hi), time %in% evalTime)[[stateName]]

# Compute the differences and store in stateDiff

stateDiff[,i] <- subsetHi - subsetLo

# Store the difference between the elevated and reduced parameter value in the i-th element of parmDiff

parmDiff[i] <- paramsHi[i] - paramsLo[i]

}

# Add the value of the state variable given the (unchanged!) parameter values

states <- ode(y = inits,

times = times,

parms = parms,

func = RatesNUCOM,

method = method)

stateDiff[,stateName] <- subset(as.data.frame(states), time %in% evalTime)[[stateName]]

### Compute sensitivity indices

SI <- stateDiff # make copy of data.frame stateDiff

for(i in sensiParms) {

SI[,paste("SI_d",i,sep="_")] <- stateDiff[,i] / parmDiff[i] # add index as new column

}We can now inspect the sensitivity index:

SI## time BC1 NDEPCO SEEDC1 SEEDC2 SEEDN1 SEEDN2 NCONC1 NCONC2 GMAX1 GMAX2 MORT1 MORT2 DECAY1 DECAY2

## 1 150 32.99822 -12.50112 6.652722 -0.2327224 -0.03769049 -0.03926729 -337.8727 403.0377 43.85381 -6.612128 -477.1301 417.7125 -0.1598449 -0.1604021

## CCONMO NCONMO EFFMO SI_d_NDEPCO SI_d_SEEDC1 SI_d_SEEDC2 SI_d_SEEDN1 SI_d_SEEDN2 SI_d_NCONC1 SI_d_NCONC2 SI_d_GMAX1 SI_d_GMAX2 SI_d_MORT1

## 1 -0.1167409 0.1031849 0.1638643 -31.2528 3.326361 -0.1163612 -0.9422622 -0.9816823 -84468.17 100759.4 0.7308969 -0.03673404 -3976.084

## SI_d_MORT2 SI_d_DECAY1 SI_d_DECAY2 SI_d_CCONMO SI_d_NCONMO SI_d_EFFMO

## 1 2320.625 -7.992245 -2.673368 -1.167409 10.31849 4.096608We can also calculate the elasticity indices, rounded to 5 digits and sorted on decreasing absolute value:

Es <- parms[sensiParms] / SI$BC1 * SI[,paste("SI_d",sensiParms,sep="_")]

Es <- as.numeric(Es)

names(Es) <- sensiParms

round(Es[order(abs(Es),decreasing=TRUE)],5)## MORT1 MORT2 NCONC2 NCONC1 GMAX1 NDEPCO SEEDC1 GMAX2 SEEDC2 EFFMO DECAY2 DECAY1 CCONMO NCONMO SEEDN2

## -72.29634 63.29319 61.06962 -51.19560 6.64488 -1.89421 1.00804 -1.00189 -0.03526 0.02483 -0.02430 -0.02422 -0.01769 0.01563 -0.00595

## SEEDN1

## -0.00571We could use a barplot to show the elasticities:

barplot(Es, col = 1:8, beside = TRUE)

legend(x="topright", horiz=FALSE, bty="n", legend=names(Es), pch=22, cex=0.7, col=1:8)

Calibration

First, load the data from the file NUCOM.csv, omitting the first 2 rows (names, and units), after which we assign the names manually:

dat = read.csv("pathToFileFolder/NUCOM.csv", head=FALSE, sep=',', skip=2)

names(dat) <- c("time","BC1","BC2")Explore the data by showing the header of the file:

head(dat)## time BC1 BC2

## 1 10 131.29 72.774

## 2 28 315.35 96.660

## 3 53 650.20 104.250

## 4 117 785.26 231.940

## 5 167 680.69 339.630

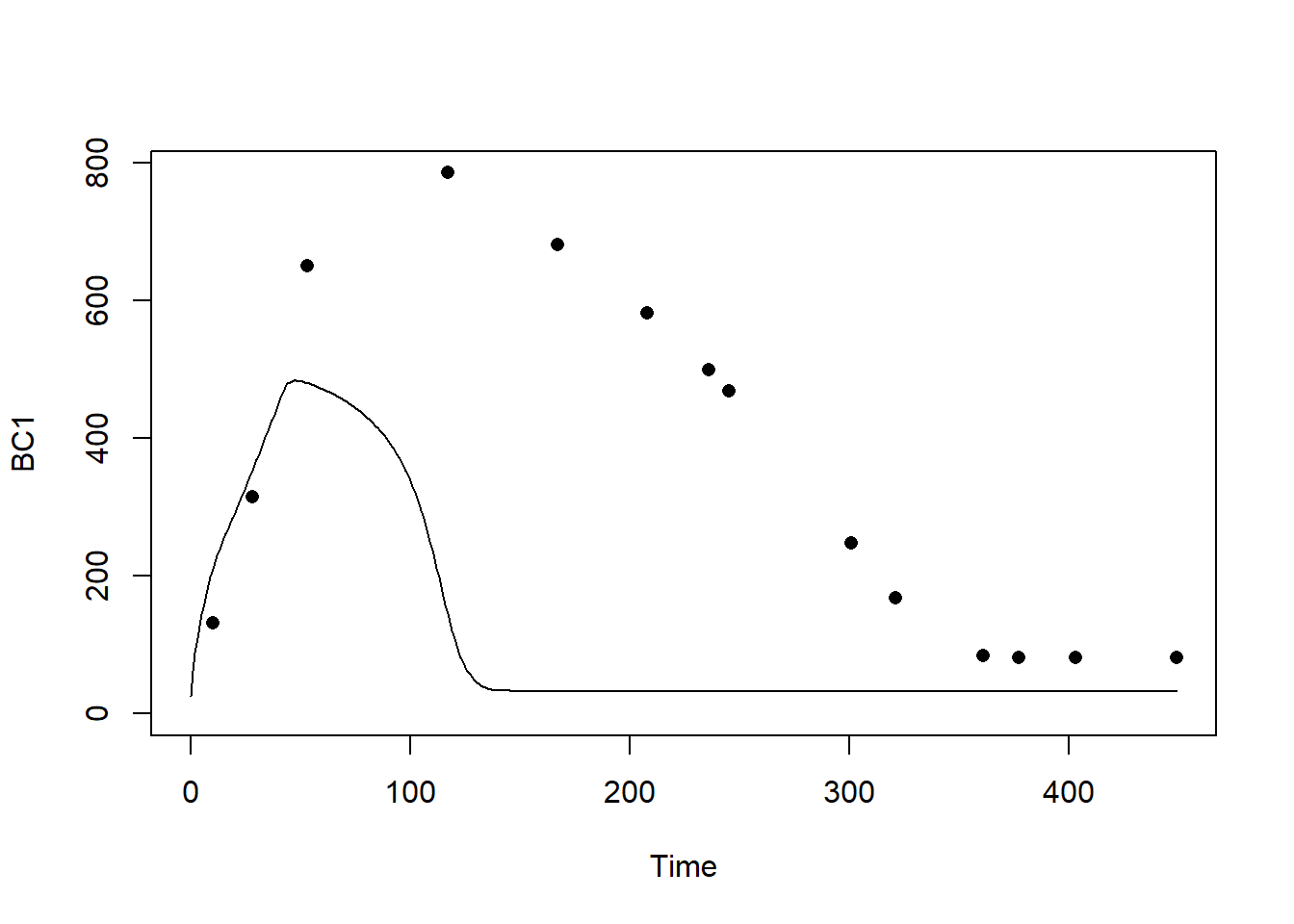

## 6 208 581.41 443.450Let’s first simulate the model with default parameter values and these initial state value, and check how well the modelled predictions for “BC1” agree with the measured values for state “BC1”:

# Simulate with default parameter values

states <- ode(y = InitNUCOM(),

times = seq(from=0, to=max(dat$time), by=1),

parms = ParmsNUCOM(),

func = RatesNUCOM,

method = "ode45")

# Plot

plot(states[,"time"], states[,"BC1"], type="l", xlab="Time", ylab="BC1", ylim=c(0, max(dat$BC1)))

points(dat$time, dat$BC1, pch=16)

We see that the models does not faithfully represent the patterns observed in nature.

In order to calibrate the model, we first have to define a few

functions (predictionError, predRMSE,

transformParameters and calibrateParameters)

that allow us to do the calibration. These functions can be found in

template 6. Note that we do not have to fill something in here: we only

are defining these functions as is, and later when we

call (use) these functions we have to supply them with the

appropriate inputs.

First, function predictionError (copy from template

6):

# Define a function that computes prediction errors

# NOTE: nothing needs to be filled in here, so this function can be used as-is

# (unless there is a need to change it, e.g. change the solver method, or include events etc.)

predictionError <- function(p, # the parameters

y0, # the initial conditions

fn, # the function with rate equation(s)

name, # the name of the state parameter

obs_values, # the measured state values

obs_times, # the time-points of the measured state values

out_time = seq(from = 0, # times for the numerical integration

to = max(obs_times), # default: 0 - max(obs_times)

by = 1) # in steps of 1

) {

# Get out_time vector to retrieve predictions for (combining 'obs_times' and 'out_time')

out_time <- unique(sort(c(obs_times, out_time)))

# Solve the model to get the prediction

pred <- ode(y = y0,

times = out_time,

func = fn,

parms = p,

method = "ode45")

# NOTE: in case of a 'stiff' problem, method "ode45" might result in an error that the max nr of

# iterations is reached. Using method "rk4" (fixed timestep 4th order Runge-Kutta) might solve this.

# Get predictions for our specific state, at times t

pred_t <- subset(pred, time %in% obs_times, select = name)

# Compute errors, err: prediction - obs

err <- pred_t - obs_values

# Return

return(err)

}Second, function predRMSE (copy from template 6):

# Define a function that computes the Root Mean Squared Error, RMSE

# NOTE: nothing needs to be filled in here, so this function can be used as-is

predRMSE <- function(...) { # NOTE: these ... are ellipsis, so DO NOT fill in something here!

# Get prediction errors

err <- predictionError(...) # NOTE: these ... are ellipsis, so DO NOT fill in something here!

# Compute the measure of model fit (here the Root Mean Squared Error)

RMSE <- sqrt(mean(err^2))

# Return the measure of model fit

return(RMSE)

}Third, function transformParameters (copy from template

6):

# Define a function that allows for transformation and back-transformation

# NOTE: nothing needs to be filled in here, so this function can be used as-is

transformParameters <- function(parm, trans, rev = FALSE) {

# Check transformation vector

trans <- match.arg(trans, choices = c("none","logit","log"), several.ok = TRUE)

# Get transformation function per parameter

if(rev == TRUE) {

# Back-transform from real scope to parameter scope

transfun <- sapply(trans, switch,

"none" = mean,

"logit" = plogis,

"log" = exp)

}else {

# Transform from parameter scope to real scope

transfun <- sapply(trans, switch,

"none" = mean,

"logit" = qlogis,

"log" = log)

}

# Apply transformation function

y <- mapply(function(x, f){f(x)}, parm, transfun)

# Return

return(y)

}And fourth, function calibrateParameters (copy from

template 6):

# Define a function that performs the calibration of specified parameters

# NOTE: nothing needs to be filled in here, so this function can be used as-is

calibrateParameters <- function(par, # parameters

init, # initial state values

fun, # function that returns the rates of change

stateCol, # name of the state variable

obs, # observed values of the state variable

t, # timepoints of the observed state values

calibrateWhich, # names of the parameters to calibrate

transformations, # transformation per calibration parameter

times = seq(from = 0, # times for the numerical integration

to = max(t), # default: 0 - max(t)

by = 1), # in steps of 1

... # NOTE: these ... are ellipsis, so DO NOT fill in something here!

# these are the optional extra arguments past on to optim()

) {

# check names of parameters that need calibration

calibrateWhich <- match.arg(calibrateWhich, choices = names(par), several.ok = TRUE)

# Create vector with transformations for all parameters (set to "none")

tranforms <- rep("none", length(par))

names(tranforms) <- names(par) # set the names of each transformation

# Overwrite the elements for parameters that need calibration

tranforms[calibrateWhich] <- transformations

# Transform parameters

par <- transformParameters(parm = par, trans = tranforms, rev = FALSE)

# Get parameters to be estimated

par_fit <- par[calibrateWhich]

# Specify the cost function: the function that will be optimized for the parameters to be estimated

costFunction <- function(parm_optim, parm_all,

init, fun, stateCol, obs, t,

times = t,

optimNames = calibrateWhich,

transf = tranforms,

... # NOTE: these ... are ellipsis, so DO NOT fill in something here!

) {

# Gather parameters (those that are and are not to be estimated)

par <- parm_all

par[optimNames] <- parm_optim[optimNames]

# Back-transform

par <- transformParameters(parm = par, trans = transf, rev = TRUE)

# Get RMSE

y <- predRMSE(p = par, y0 = init, fn = fun,

name = stateCol, obs_values = obs, obs_times = t,

out_time = times)

# Return

return(y)

}

# Fit using optim

fit <- optim(par = par_fit,

fn = costFunction,

parm_all = par,

init = init,

fun = fun,

stateCol = stateCol,

obs = obs,

t = t,

times = times,

...) # NOTE: these ... are ellipsis, so DO NOT fill in something here!

# These are the optional additional arguments passed on to optim()

# Gather parameters: all parameters updated with those that are estimated

y <- par

y[calibrateWhich] <- fit$par[calibrateWhich]

# Back-transform

y <- transformParameters(parm = y, trans = tranforms, rev = TRUE)

# put all (constant and estimated) parameters back in $par

fit$par <- y

# Add the calibrateWhich information

fit$calibrated <- calibrateWhich

# return 'fit'object:

return(fit)

}After defining these 4 functions, we can get started with the actual calibration. For this we need to make some choices, e.g. which parameters to calibrate, and what are the transformations needed (no transformation, logarithmic transformation, or logit transformation, see explanation in template 6). Because all parameters in this model should be positive, let’s use a log-transform in the calibration routine.

Let’s calibrate only the parameters “NCONC1”,“GMAX1” and “MORT1” of

the mini-model, based on the dataset dat and the

measurements (and thus state) “BC1”. We only have to simulate to the

maximum time of our dataset dat (thus

max(dat$time)). Note that the calibration may take some

time (several seconds/minutes) to complete:

# Perform the calibration

fit <- calibrateParameters(par = ParmsNUCOM(),

init = InitNUCOM(),

fun = RatesNUCOM,

stateCol = "BC1",

obs = dat$BC1,

t = dat$time,

times = seq(from = 0, to = max(dat$time), by = 1),

calibrateWhich = c("NCONC1","GMAX1","MORT1"),

transformations = c("log","log","log"))We can retrieve information on the model fitting by looking at the

object fit:

fit## $par

## NDEPCO SEEDC1 SEEDC2 SEEDN1 SEEDN2 NCONC1 NCONC2 GMAX1 GMAX2 MORT1 MORT2 DECAY1

## 2.00000000 10.00000000 10.00000000 0.20000000 0.20000000 0.03634621 0.02000000 291.91637389 900.00000000 0.37558418 0.90000000 0.10000000

## DECAY2 CCONMO NCONMO EFFMO

## 0.30000000 0.50000000 0.05000000 0.20000000

##

## $value

## [1] 47.41233

##

## $counts

## function gradient

## 218 NA

##

## $convergence

## [1] 0

##

## $message

## NULL

##

## $calibrated

## [1] "NCONC1" "GMAX1" "MORT1"Explanation of these outputs are given in the optimization function explanation of Template 6:

- “par”: the calibrated parameter values. This includes all model parameters: those that are kept constant as well as those that have been calibrated;

- “value”: this is the value of the cost-function at the values as given in “par”. Here, this is thus the RMSE of the model predictions given the parameter values in “par”;

- “counts”: information about the number of iterations that the

optimfunction needed; - “convergence”: information about the convergence of the

optimfunction: a value of 0 means that the estimation successfully converged! For other values, see?optimfor the help-documentation of theoptimfunction; - “message”: a character string giving any additional information

returned by the optimizer, or

NULL; - “calibrated”: a character string with the model parameters that have

been calibrated (thus the vector passed as input to argument

calibrateWhich).

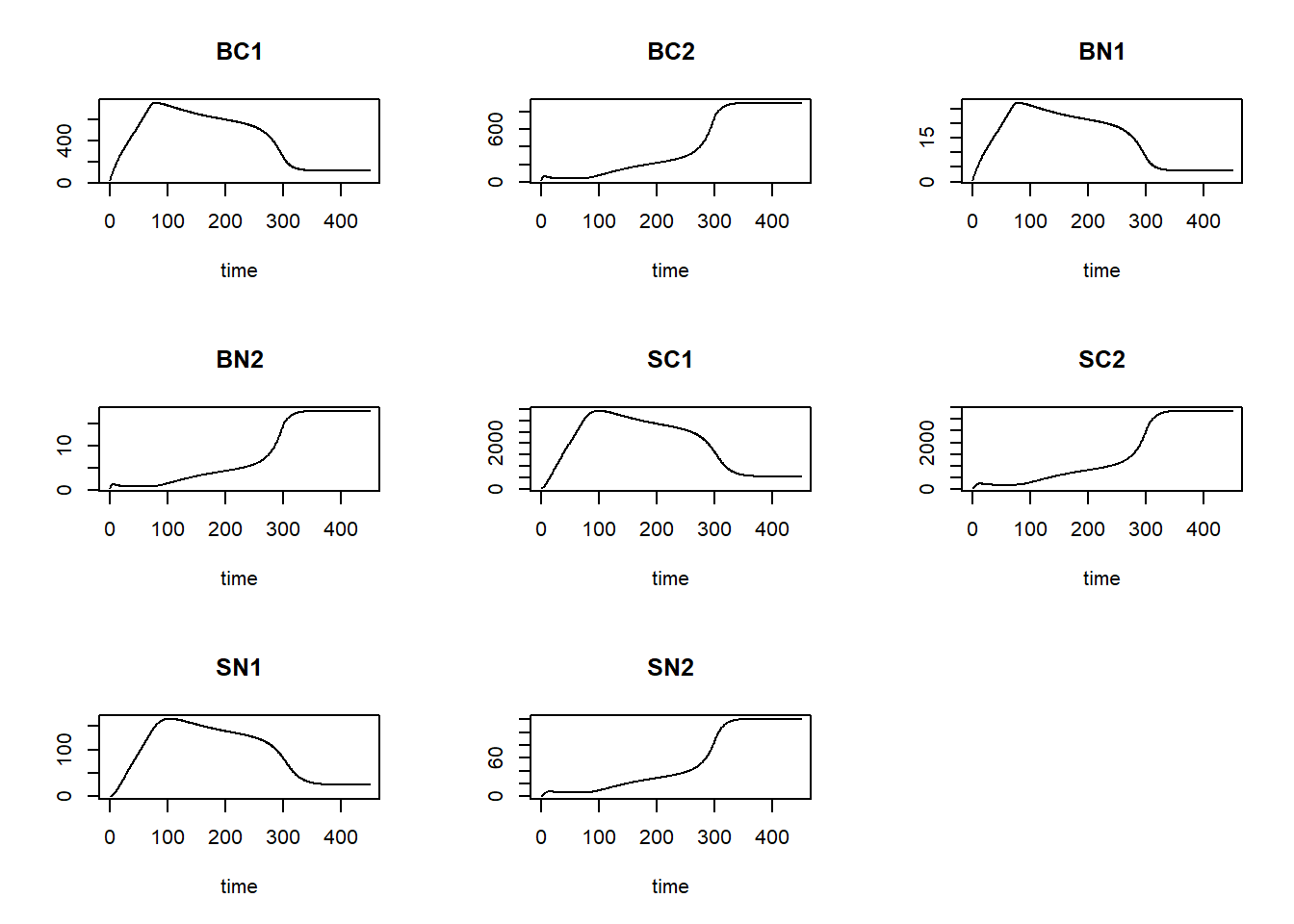

Then, we can use the fitted/calibrated model parameters (object

fit$par) to simulate the model, and plot the result:

# Fit the model using the calibrated values

statesFitted <- ode(y = InitNUCOM(),

times = seq(from = 0, to = max(dat$time), by = 1),

parms = fit$par,

func = RatesNUCOM,

method = "ode45")

plot(statesFitted)

Also, we can plot the modelled pattern of state “BC1” as function of time, including the measured values of “BC1”:

plot(statesFitted[,"time"], statesFitted[,"BC1"], type = "l", xlab = "Time", ylab = "BC1")

points(dat$time, dat$BC1, pch = 16)

The calibrated model seems to capture the observed pattern quite well! In order to quantify the difference between the calibrated model and the model solved with default parameter values, let’s compute the RMSE for both models:

predRMSE(p = ParmsNUCOM(),

y0 = InitNUCOM(),

fn = RatesNUCOM,

name = "BC1",

obs_values = dat$BC1,

obs_times = dat$time,

out_time = seq(from = 0, to = max(dat$time), by = 1))## [1] 343.1205predRMSE(p = fit$par,

y0 = InitNUCOM(),

fn = RatesNUCOM,

name = "BC1",

obs_values = dat$BC1,

obs_times = dat$time,

out_time = seq(from = 0, to = max(dat$time), by = 1))## [1] 47.41233We that the calibrated model has a RMSE that is much, much lower than that with the default parameters, thus: via calibration we have improved model fit massively!